One of the areas that has received a ton of attention over the last few decades is the combined fields of behavioral economics and behavioral finance. Like many people whose careers touch on these fields, I have always found them intriguing. Together, they are one of my favorite topics in finance. That said, there are some reasons to be cautious and skeptical about any research finding in finance. This is doubly true for behavioral finance findings.

Potential Concerns

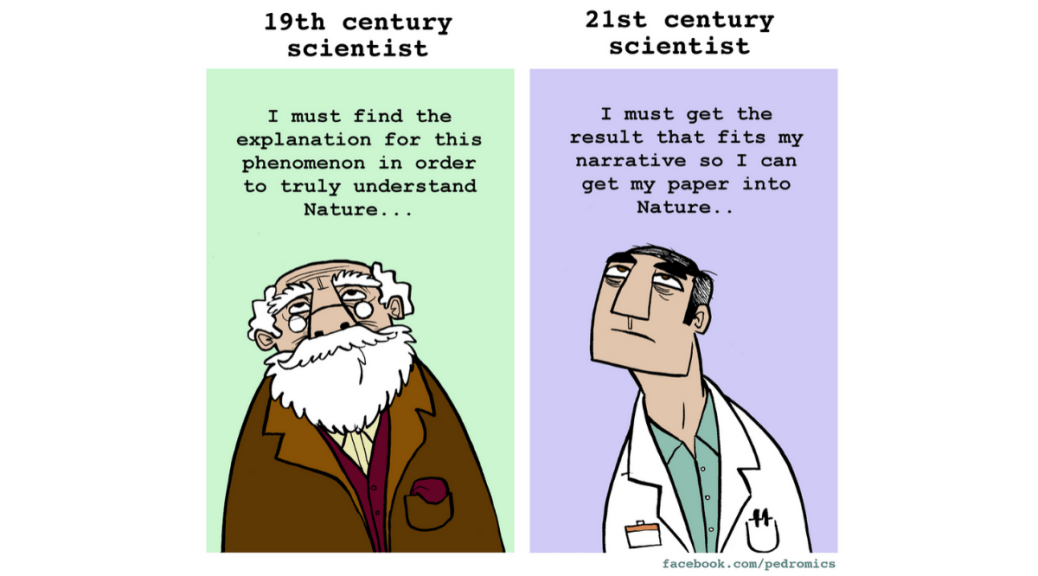

The first issue that comes up ties into the concept of incentives. Academic research, which has been the source of most behavioral findings, is crawling with incentives. Unfortunately, if we aren’t careful, incentives can drive bad behavior just as easily as good behavior.

There is a well-established replication crisis in the world of research.

The vast majority of psychological research (which is a foundational component of behavioral research) is based on W.E.I.R.D. samples which may have questionable application outside of the environment of the studies.

This doesn’t mean behavioral finance should be ignored. There are legitimate reasons to believe that people are not homo economicus (purely rational economic optimizers) and instead exhibit some predictable biases that distort decision making away from the traditional rational actor models in textbooks. For evidence, let me submit the fact that both of these TV shows shown below actually made it onto network TV! However, it does mean that it is reasonable to exhibit some skepticism about findings from behavioral finance.

What Gets Measured

One of the key measurements of faculty at a majority of business schools is their ability to do quality research. This, in and of itself, is not a bad thing.[1] One reason research often gets a higher priority is that it is easier to measure. Statistics like the number of publications in peer-reviewed journals, presentations at academic conferences, number of citations, h-index, and journal quality are all factors that may be considered in tenure and promotion decisions.[2] The idea is that these are all specific numbers that can be recorded and compared to rank the quality of one’s research. If it is getting measured, there is an incentive to manage it. Incentives are among the most powerful forces driving behavior, and they can often drive behavior that was not intended. These are sometimes referred to as perverse incentives.

How do these incentives create the potential for questionable research? Consider the case of a recent controversy around, ironically enough, a paper titled “Signing at the Beginning Makes Ethics Salient and Decreases Dishonest Self-Reports in Comparison to Signing at the End” by Lisa L. Shu, Nina Mazar, Francesca Gino, Dan Ariely, and Max H. Bazerman. The main finding of this study is that signing an honesty pledge at the beginning of a form can be more effective than signing at the end. This seems reasonable from a behavioral perspective, as it primes the brain to think about honesty from the start rather than after the fact. However, this paper was based on three studies, two involving university employees and students (we’ll come back to this in a bit) and one involving data from an insurance company. Apparently, later attempts to replicate the results from the insurance company were not successful. It turns out that the reason for this is that the data was fake. While this has ultimately been corrected and the paper retracted, it brings to mind two questions. The first is why the errors were not recognized by any of the five researchers on the paper. According to the authors, the two potential sources for the error were Dan Ariely (as he was the person responsible for handling the data) or someone at the insurance firm who provided the data.[3] The second question is how widely the conclusions of this paper have been accepted and spread as true. How many of the people who learned the result will know that the paper was retracted because the data used to generate the result was inaccurate, and will update their knowledge base?

By measuring research publication and incorporating demands for research into performance appraisals, tenure/promotion requirements, grant funding, etc., academic researchers have created an environment where there are incentives to manage the results of these measures. While not focusing on behavioral finance research, this 2019 paper addresses how publication and citation data have become less meaningful in recent years due to the emphasis on these numbers for evaluating faculty. Specifically:

Moreover, recent studies indicate that these metrics have become targets and follow Goodhart’s Law, according to which, “when a measure becomes a target, it ceases to be a good measure.”

Replication Crisis

Over the last 15 or so years, there has been growing concern about the inability to replicate key findings in many studies. Interestingly, while the lack of ability to replicate findings has occurred across a range of areas, these studies tend to center around two general areas — medical studies and psychologically-based studies. Here is a quote from DisplayR’s blog:

Although sometimes referred to as the replication crisis in science, at this stage the replication crisis as currently documented largely exists in:

Medical studies, such as clinical trials (e.g., Ioannidis 2005)

Psychological-style laboratory experiments/survey studies, conducted in the various sub-disciplines of psychology (Collaboration 2015) and related disciplines such as marketing (Armstrong and Green, 2017) and economics (e.g., Camerer et al. 2016)

A little later in the blog, the author (Tim Bock) suggests some possible reasons, which tie into two main areas: the incentive problem raised above and technical issues such as methodology and data challenges.

A widespread lack of methodological sophistication, with researchers using poorly designed experiments with small sample sizes and inappropriate statistical models (Gelman and Carlin, 2014).

The “publish or perish” economic model of universities, which provides an economic incentive for researchers to publish work showing effects.

Clerical errors in reporting and programming.

Fraud, with research being invented to further career interests or the interests of organizations sponsoring the research.
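To see how small samples and the incentive to publish work showing effects can combine, here is a minimal simulation sketch. It is not taken from the blog or the cited papers; the true effect size, sample size, number of studies, and the crude 1.96 cutoff for "significance" are all assumptions chosen purely for illustration.

```python
import random
import statistics


def simulate_studies(true_effect=0.2, n_per_study=20, n_studies=2000, seed=0):
    """Run many small studies and 'publish' only significant positive results.

    All parameter values are illustrative assumptions, not real data.
    """
    rng = random.Random(seed)
    all_estimates, published = [], []
    for _ in range(n_studies):
        # Each study measures the same small true effect with noisy data.
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        estimate = statistics.fmean(sample)
        std_error = statistics.stdev(sample) / n_per_study ** 0.5
        all_estimates.append(estimate)
        # Crude filter: only "significant" positive findings get published.
        if estimate / std_error > 1.96:
            published.append(estimate)
    return all_estimates, published


all_estimates, published = simulate_studies()
print(f"true effect:                0.20")
print(f"average across all studies: {statistics.fmean(all_estimates):.2f}")
print(f"average across 'published': {statistics.fmean(published):.2f}")
print(f"share of studies published: {len(published) / len(all_estimates):.0%}")
```

Under these assumptions, the studies that clear the filter are the ones that happened to overestimate the effect, so the published average sits above the true value even though no individual researcher did anything wrong.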

We talked a bit about the incentive issue and how it drives academic researchers (most of whom are faculty) to publish, even if that is not their primary interest or expertise. The other factor, which is harder to appreciate for those who have not done academic research, is the set of technical challenges involved. Remember, this was my career for a while, so I’ve been (reluctantly) in the trenches. Data is rarely clean. The assumptions behind econometric methodologies are often quite technical, and choosing the best methodology for a particular situation requires some subjective judgment.

In addition, there is often a significant time lag between the time an idea is generated and the research paper is published. According to this 2013 article, the average time between submission and actual publication in business and economics is nearly 18 months. That is after the research is done and the paper is written! It is not unusual for the actual research to take several months to more than a year (as faculty are often busy with teaching classes, committee work, and other demands in addition to research). In a perfect world, these challenges would not lead to questionable research. However, the reality of the world is that people in all professions are subject to both errors and perverse incentives to varying degrees. People are not perfect.

This is W.E.I.R.D.

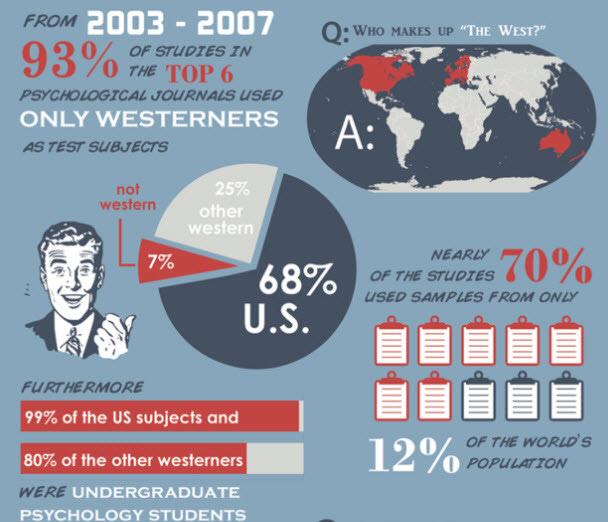

We covered two of the issues with trusting research findings — incentives and technical challenges with data, modeling and econometric techniques. However, there is a third reason that makes psychological, marketing, and economic papers especially challenging. They are W.E.I.R.D. If you have been wondering why I’m abbreviating the word, it is because it is actually an acronym for Western Educated Industrialized Rich and Democratic — in other words, US college students.

According to a 2020 article in Psychology Today,

In the 893 articles published in 2015 and 2016 in the top five cross-cultural psychology journals, a staggering 96.7% participants were WEIRD, and more than 85% were American.

The gist of the argument is that, and brace yourself for this shocking statement, people in different “tribes” behave differently. While there may be some overlap, we should expect people who grew up in Haiti, Beijing, a rough part of a large US city, and the rural Midwest to have developed some noticeably different behavioral characteristics. Moscow, Russia is going to be quite a bit different from Moscow, Iowa.

Why is there such a bias towards doing studies with W.E.I.R.D. samples? Well, imagine you are a faculty member at the University of Iowa. How convenient is it going to be to study students who also attend the University of Iowa vs. recruiting a truly random sample of people from across the globe? Practicality (and cost savings) is going to push research towards non-representative samples. We also have to recognize that college students are probably not a great match for real-world analysis, so extending the results to people in general is risky. Undergraduates don’t have the same range of life experiences as 35-40 year old mid-career professionals or 50-65 year old individuals in senior positions. The incentives behind the decisions being examined in an experimental study are likely not the same as the incentives driving a person’s decisions in a work environment. Creating a randomized controlled trial that captures behavior in the real world is going to be extremely difficult.

If we have problems getting a truly representative sample, being able to control for incentives, and getting the environment accurate enough to test for the specific effect we want without any conflicts, does this imply that psychological studies such as many behavioral finance studies should be ignored? The answer is a resounding NO! However, they do need to be treated with some skepticism. Instead of taking a study at face value, we need to think of it as a brick in the wall.

It is incremental new information. However, we establish a foundation not through a single brick, but through many bricks that work together to support our understanding.

Think about how similar the study group and environment are to the situation you are evaluating. Can you count on the behavioral bias that the study identified to behave similarly? Are there several studies documenting the same effect in a similar environment, or is it based on a single study that has not been replicated or was done in different conditions?

Also, and this is true for most studies, results represent averages instead of responses from each individual. Don’t assume that because the average person is subject to overconfidence, the situation you are in will see overconfidence play a part. We know that there is a large amount of variance in most activities, where similar inputs can produce drastically different outputs. Behavioral biases are likely to follow similar distributions.
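The gap between an average effect and any one individual can be made concrete with a small simulation. This is only a sketch under made-up assumptions: the "overconfidence score", its mean of 0.3, and its spread of 1.0 are hypothetical numbers, not estimates from any study.

```python
import random
import statistics


def simulate_bias_scores(mean_bias=0.3, spread=1.0, n_people=10_000, seed=1):
    """Draw hypothetical overconfidence scores (positive means overconfident)."""
    rng = random.Random(seed)
    return [rng.gauss(mean_bias, spread) for _ in range(n_people)]


scores = simulate_bias_scores()
average = statistics.fmean(scores)
# Share of simulated people who show no overconfidence at all.
share_not_overconfident = sum(s <= 0 for s in scores) / len(scores)

print(f"average score: {average:.2f}")
print(f"share with no overconfidence: {share_not_overconfident:.0%}")
```

With these assumed numbers the average person is overconfident, yet a sizable minority of the simulated individuals are not, which is why a study average is a weak guide to one specific person or situation.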

[1] I would argue that teaching, especially at the undergraduate and master’s levels, should be a higher priority, but that could very well be a personal bias of mine. Ideally, faculty would excel at both (and some do), but they are different skill sets and that is a high bar for expectations.

[2] The h-index is the largest number h such that h of a researcher’s publications each have at least h citations. For example, if I have 12 publications and exactly 6 of those publications have 6 or more citations, my h-index would be 6. If all 12 have 12 or more citations, my h-index would be 12.
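The h-index arithmetic in this note can be checked with a few lines of Python. This is a minimal sketch: the function name `h_index` and the citation counts are made up for illustration, and it uses the standard definition (the largest h such that h publications have at least h citations each).

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` papers all have at least `rank` citations
        else:
            break
    return h


# Hypothetical citation counts matching the two examples in the note.
six_of_twelve = [6] * 6 + [2] * 6   # 6 papers with 6 citations, 6 with only 2
all_twelve = [12] * 12              # all 12 papers with 12 citations

print(h_index(six_of_twelve))  # 6
print(h_index(all_twelve))     # 12
```

Note how little the number says about quality: a handful of heavily cited papers and a long tail of lightly cited ones can produce the same h-index.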

[3] Note that this is NOT an accusation that either party committed fraud. It is easy to jump to conclusions and have those conclusions be absolutely wrong. Instead, it is a statement that the data clearly had errors, which the authors acknowledged when they retracted the paper.